Before discussing the Robots.txt file validator, let's first understand what a Robots.txt file is.

A Robots.txt file is a plain text file that tells search engine robots which pages of a website should not be crawled. It is the first file search engine robots visit to find instructions about the crawling process.
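To make this concrete, here is a minimal sketch of how such instructions are interpreted, using Python's standard `urllib.robotparser` module. The file content, the `example.com` URLs and the `ExampleBot` name are assumptions made up for illustration; they do not come from any real site.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical Robots.txt content, for illustration only.
SAMPLE = """\
User-agent: *
Disallow: /admin/
"""

parser = RobotFileParser()
parser.parse(SAMPLE.splitlines())

# Ask whether a (hypothetical) crawler may fetch two example URLs.
admin_ok = parser.can_fetch("ExampleBot", "https://example.com/admin/settings")
blog_ok = parser.can_fetch("ExampleBot", "https://example.com/blog/post")
print(admin_ok, blog_ok)
```

Under these rules, everything beneath `/admin/` is off limits to all robots, while the rest of the site stays crawlable.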

How does the LXR 'Robots.txt Validator' help?

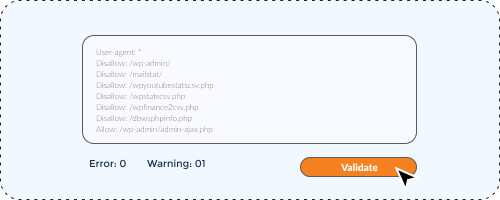

The Robots.txt validator helps identify errors in the Robots.txt file, including mistyped words, syntax errors, and logical errors.

Because search engines consult Robots.txt before crawling, getting this file right is a prerequisite for every website.
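The kinds of checks described above can be sketched in a few lines of Python. This is only an illustrative approximation, not the LXR tool's actual implementation: the function name `validate_robots`, the set of accepted directives, and the specific messages are all assumptions.

```python
# Directives this sketch accepts; an assumption, not an exhaustive list.
KNOWN_DIRECTIVES = {"user-agent", "disallow", "allow", "sitemap", "crawl-delay"}


def validate_robots(text):
    """Return a list of (line_number, message) tuples for problems found."""
    problems = []
    seen_user_agent = False
    for number, raw in enumerate(text.splitlines(), start=1):
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        if ":" not in line:
            problems.append((number, "syntax: missing ':' separator"))
            continue
        field, value = (part.strip() for part in line.split(":", 1))
        name = field.lower()
        if name not in KNOWN_DIRECTIVES:
            # Catches mistyped words such as 'Disalow'.
            problems.append((number, f"unknown directive '{field}'"))
            continue
        if name == "user-agent":
            seen_user_agent = True
        elif name in ("allow", "disallow"):
            if not seen_user_agent:
                problems.append((number, "logic: rule before any User-agent line"))
            if value and not value.startswith(("/", "*")):
                problems.append((number, "syntax: path should start with '/'"))
    return problems


sample = "Disallow: /a\nUser-agent: *\nDisalow: /b\nDisallow private\nAllow: c\n"
for number, message in validate_robots(sample):
    print(f"line {number}: {message}")
```

Each reported problem carries its line number, so the offending line can be located and corrected in the input.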

The LXR Robots.txt validator serves a twofold purpose:

- It can validate an existing Robots.txt file.
- Webmasters can paste the content of a new Robots.txt file and check it before uploading it to the website root.

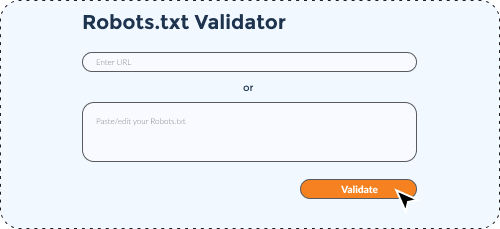

To fetch and assess your site's Robots.txt file, all you need to do is provide your site's homepage URL. The tool fetches the existing Robots.txt file and shares its findings.
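Fetching works from the homepage URL alone because Robots.txt lives at a fixed path in the site root. The sketch below shows one way to derive that location and download the file with Python's standard library. The helper names `robots_url` and `fetch_robots` are hypothetical, and this is not how the LXR tool itself is known to work.

```python
from urllib.parse import urlsplit, urlunsplit
from urllib.request import urlopen


def robots_url(homepage_url):
    """Build the Robots.txt URL for the site that serves homepage_url."""
    parts = urlsplit(homepage_url)
    if not parts.scheme or not parts.netloc:
        raise ValueError("expected an absolute URL such as https://example.com/")
    # Keep scheme and host; replace path, query and fragment.
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


def fetch_robots(homepage_url, timeout=10):
    """Download the Robots.txt file as text (performs a network request)."""
    with urlopen(robots_url(homepage_url), timeout=timeout) as response:
        return response.read().decode("utf-8", errors="replace")


print(robots_url("https://www.example.com/shop/index.html?x=1"))
```

Any page URL on the site resolves to the same Robots.txt location, so the homepage is simply the most convenient input.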

Alternatively, you can open your website's Robots.txt file yourself, then copy its full contents and paste them into the input field.

In either case, just press the 'Validate' button. The tool highlights all syntax and logical errors that you may want to fix.

You can correct the errors in the same input field. Furthermore, if you would like to exclude a URL from crawling, or allow a particular file to be crawled from a blocked directory, you can do so with the help of the tool and, in the process, prepare the final file to upload to the root directory of your site.
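As an illustration of allowing one file inside a blocked directory, the sketch below checks such a rule set with Python's standard `urllib.robotparser`. The paths, the `example.com` URLs and the `ExampleBot` name are hypothetical. The `Allow` line is placed before the `Disallow` line because Python's parser applies the first matching rule; crawlers that pick the most specific (longest) match should reach the same result for this file.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: block /private/ but allow one file inside it.
RULES = """\
User-agent: *
Allow: /private/press-kit.pdf
Disallow: /private/
"""

parser = RobotFileParser()
parser.parse(RULES.splitlines())

press_kit_ok = parser.can_fetch("ExampleBot", "https://example.com/private/press-kit.pdf")
notes_ok = parser.can_fetch("ExampleBot", "https://example.com/private/notes.html")
print(press_kit_ok, notes_ok)
```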

As mentioned above, the tool lets you make all necessary amendments to the file. You can then copy the final version and paste it into your original Robots.txt file before uploading it to the root directory.
